The Clock Is Already Running Out on Traditional Product Management

Here is the uncomfortable truth that most career coaches won’t say out loud: the product management skills that earned you your last promotion may not be enough to keep your next job.

This is not a prediction about some distant future. It is happening right now, inside hiring committees at companies ranging from early-stage AI startups to Fortune 500 technology divisions. According to industry hiring data and firsthand accounts from senior engineering leaders, the demand for Product Managers who can navigate probabilistic, AI-driven systems has surged — while the supply of genuinely qualified candidates remains critically thin.

The result is a widening skills gap that is quietly restructuring the entire profession.

What the Job Postings Are Actually Saying

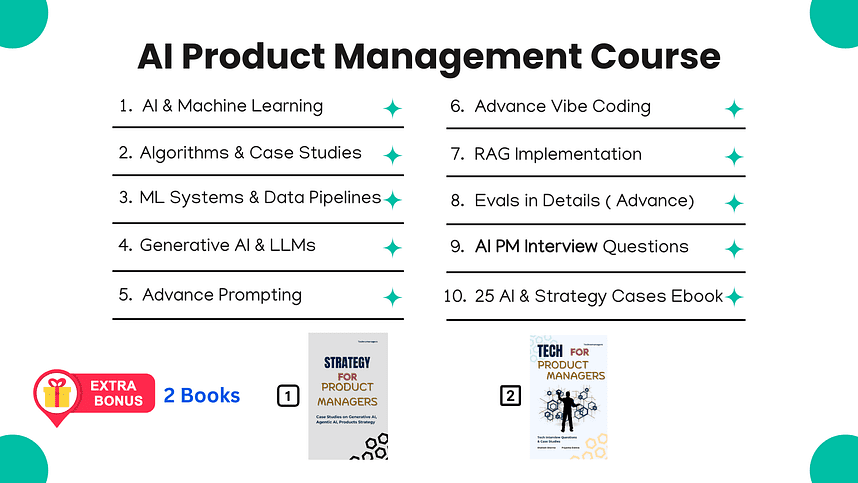

A review of AI-focused product management job listings across LinkedIn, Greenhouse, and Lever reveals a consistent pattern. Employers are no longer satisfied with candidates who can write a clean Product Requirements Document or run a sprint retrospective. They are demanding fluency in concepts like vector databases, retrieval-augmented generation, model evaluation frameworks, and agentic workflow design.

- Postings for roles explicitly titled “AI Product Manager” have increased by over 300% since early 2023, according to labor market analytics platforms tracking technology hiring trends.

- Compensation premiums for AI PM roles average 25–40% above equivalent traditional PM positions at comparable company stages.

- Rejection rates for traditional PMs applying to AI-native roles remain disproportionately high, with hiring managers citing a lack of technical depth as the primary disqualifier.

The market has spoken. The question is whether working product managers are listening — or whether they are waiting for the disruption to arrive before they act.

The Probabilistic Problem: Why AI Products Break Differently

The single most dangerous assumption a traditional product manager can carry into an AI role is that the product lifecycle works the same way it always has. It does not.

In conventional software development, a broken feature is a bug. The code is wrong, the engineer fixes it, and the button works 100% of the time. The relationship between input and output is deterministic and binary.

Artificial intelligence does not operate this way.

Managing Uncertainty as a Core Competency

An AI-powered chatbot might deliver an accurate, helpful response 95% of the time and generate a confident, completely fabricated answer the remaining 5%. A traditional PM instinct is to treat that 5% as a defect to be fixed once and closed out, like a bug. That instinct is wrong, and acting on it reveals a fundamental misunderstanding of how these systems function: the error rate of a probabilistic model can be measured, reduced, and contained through product design, but it cannot be patched to zero.

According to practitioners who have led AI product teams at enterprise software companies, the ability to manage, measure, and communicate around probabilistic outputs is not a secondary skill. It is the primary skill. Every downstream decision — from feature scoping to launch criteria to user trust design — flows from a PM’s ability to reason clearly about uncertainty.
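Reasoning about uncertainty starts with admitting that a measured failure rate is itself an estimate. The sketch below is one illustrative way to put a range around it, using a Wilson score interval. The function name and the evaluation counts are hypothetical and not taken from any real product.

```python
import math

def wilson_interval(failures: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a failure rate (z=1.96 is roughly 95% confidence)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = failures / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)

# Hypothetical evaluation run: 10 fabricated answers in 200 graded responses.
low, high = wilson_interval(failures=10, trials=200)
print(f"observed failure rate: {10 / 200:.1%}")
print(f"plausible range: {low:.1%} to {high:.1%}")
```

With a sample this small, the plausible range spans several percentage points, which is the kind of caveat a launch criterion should state explicitly rather than quoting a single number.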

Compounding this challenge is the concept industry insiders call the “AI Flywheel.” Unlike traditional software, where the product remains static between updates, AI systems can be designed to improve as users interact with them. The data generated by user behavior feeds back into training and evaluation, which improves future outputs, which drives more engagement, which generates more data. The loop is not automatic: it turns only if the product captures usable signal. If a product manager cannot design user interactions that deliberately capture high-quality training signal, the product does not just stagnate. It falls behind competitors whose flywheels are spinning faster.

The Technical Fluency Threshold: You Don’t Need a PhD, But You Need More Than You Have

One of the most persistent myths circulating in product management communities is that AI product leadership requires deep mathematical expertise. It does not. But the opposite assumption — that zero technical knowledge is acceptable — is equally false and significantly more dangerous to a career.

The actual threshold is specific and learnable.

The Conversations You Cannot Afford to Lose

Consider a scenario that plays out in AI product teams every week. An engineering team reports that model accuracy is insufficient for launch. A product manager who lacks technical grounding accepts that assessment at face value and waits. An AI-fluent product manager asks a different set of questions entirely.

- Which accuracy metric is being used, and is it the right one for this use case?

- Is the model overfitting to training data and underperforming on real-world inputs?

- Was the feature selection process appropriate for the prediction task?

- Are we measuring R-squared, a goodness-of-fit metric for regression, when the task is classification and calls for precision, recall, or a similar measure?

The ability to ask these questions — not to answer them independently, but to ask them with enough precision to redirect a team — is the difference between a PM who leads an AI product and one who is led by it. Understanding the practical application of foundational algorithms like linear regression, logistic regression, and decision trees is not about passing a mathematics examination. It is about knowing which tool belongs in which situation and recognizing when your team has reached for the wrong one.
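The first question in the list above, whether the accuracy metric fits the use case, can be made concrete with a small example. This is a minimal sketch on invented data: a hypothetical classifier that never flags the rare positive class still reports high accuracy, while recall exposes the problem.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision, and recall for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs),
        # Report 0.0 when a ratio is undefined (no predicted or no actual positives).
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }

# Invented data: 5 true positives among 100 cases, and a model that always predicts 0.
y_true = [1] * 5 + [0] * 95
y_pred = [0] * 100
print(classification_metrics(y_true, y_pred))
```

If the five positive cases are the ones the product exists to catch, the headline accuracy figure says almost nothing about launch readiness.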

Generative AI: The Gold Rush Has a Fraud Problem

The generative AI market is experiencing a dynamic that economic historians will likely recognize as a classic speculative bubble formation. Hundreds of products have rushed to market built on little more than a thin API wrapper around a foundation model. The user interface changes. The underlying intelligence does not.

This is not a sustainable competitive position.

Understanding Why the Model Fails

Product managers who understand only how to send a prompt to a large language model are building careers on borrowed time. The market is already beginning to consolidate around teams that can diagnose and solve the deeper problems — hallucination rates, context window limitations, temperature sensitivity, and prompt injection vulnerabilities — rather than simply deploy a pre-built capability.

Understanding the architecture of large language models is not an academic exercise. It is a diagnostic tool. When an enterprise chatbot begins generating confident but factually incorrect responses, the product manager responsible for that system needs to know whether the failure originates in the prompt structure, the temperature setting, the retrieval mechanism, or the underlying model’s training data cutoff. Without that knowledge, the PM cannot lead the remediation. They can only report the symptom and hope someone else solves it.
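To make the temperature setting less abstract, the sketch below shows the standard idea of dividing a model's raw scores (logits) by a temperature before converting them to probabilities. The candidate tokens and their scores are invented for illustration, and real systems sample over a far larger vocabulary.

```python
import math

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical next-token candidates and scores.
tokens = ["Paris", "Lyon", "Berlin"]
logits = [4.0, 2.0, 1.0]

for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    summary = ", ".join(f"{tok}={p:.2f}" for tok, p in zip(tokens, probs))
    print(f"temperature {temperature}: {summary}")
```

At low temperature nearly all probability sits on the top-scoring token. At higher temperature the less likely tokens are sampled more often, which is one reason a high setting can make factual errors more frequent.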

The Prototype Imperative: Speed Has Become a Competitive Weapon

Perhaps the most consequential behavioral shift separating high-performing AI product managers from their peers is the willingness — and ability — to build.

The traditional product management workflow placed a hard boundary between conception and construction. A PM wrote the specification. An engineer built the thing. The cycle took weeks. In AI product development, that timeline is a liability.

From Jira Tickets to Working Prototypes

Tools like Cursor, GitHub Copilot, and direct API access have fundamentally changed what is possible for a non-engineer with domain knowledge and a clear hypothesis. A product manager who can string together APIs, adjust model parameters, and produce a functional proof-of-concept in an afternoon is operating in a different competitive category than one who cannot.

The downstream effects of this capability are significant. Engineering teams respond differently to a working prototype than to a written specification. The conversation shifts from “is this possible” to “how do we make this production-ready.” Iteration cycles compress. Product hypotheses get tested against reality rather than against assumptions. Features that would have died in a backlog get shipped.

This is not about replacing engineering expertise. It is about compressing the distance between insight and validation.

RAG Systems and Autonomous Agents: The Frontier That Is Already Here

Retrieval-Augmented Generation is not an emerging technology. It is already a common architecture behind the enterprise AI applications being deployed right now. And most product managers cannot explain how it works.

The Enterprise AI Gap No One Is Talking About

The fundamental limitation of off-the-shelf large language models is that, by default, they have no access to proprietary organizational data. Their knowledge comes from the data they were trained on, largely public text, up to a training cutoff date. They do not know a company’s internal documentation, customer support history, or real-time operational data.

RAG systems solve this by retrieving relevant information from private databases at query time and injecting it into the model’s context before generating a response. Understanding this architecture — including the role of vector databases, chunking strategies, and retrieval ranking — is now baseline knowledge for any PM working on enterprise AI products.
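The retrieve-then-inject flow described above can be sketched in a few lines. This is a toy illustration only: word-count vectors stand in for a learned embedding model, an in-memory list stands in for a vector database, and the document chunks, function names, and prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (a real system would use a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Rank stored chunks by similarity to the query and keep the top k."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Inject the retrieved chunks into the context that would be sent to the model."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

# Hypothetical internal documentation, already split into chunks.
CHUNKS = [
    "Refunds are issued within 14 days of a return request.",
    "Enterprise plans include single sign-on and audit logs.",
    "Support hours are 9am to 5pm on weekdays.",
]
print(build_prompt("How long do refunds take?", CHUNKS, k=1))
```

Each stage is a separate product decision: how documents are chunked, how similarity is scored, and how many chunks fit in the context all change what the model is able to answer.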

Beyond RAG, autonomous agents represent the next wave of deployment complexity. These are AI systems capable of planning multi-step tasks, using external tools, and executing sequences of actions toward a defined goal without continuous human oversight. The product management challenges introduced by agentic systems — safety guardrails, failure mode design, user trust calibration — are unlike anything in the traditional PM playbook.
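Two of the simplest guardrails for an agent are a tool allow-list and a step budget. The sketch below shows both under heavy simplification: the plan is a fixed list standing in for steps a model would propose, and the tool names and return strings are invented for illustration.

```python
from typing import Callable

# Hypothetical approved tools; anything not listed here is refused.
ALLOWED_TOOLS: dict[str, Callable[[str], str]] = {
    "search_flights": lambda arg: f"3 flights found for {arg}",
    "hold_seat": lambda arg: f"seat held on {arg}",
}

def run_agent(plan: list[tuple[str, str]], max_steps: int = 5) -> list[str]:
    """Execute (tool, argument) steps under a step budget and a tool allow-list."""
    log: list[str] = []
    for step, (tool, arg) in enumerate(plan):
        if step >= max_steps:
            log.append("stopped: step budget exhausted")
            break
        if tool not in ALLOWED_TOOLS:
            log.append(f"blocked: {tool} is not an approved tool; escalating to a human")
            break
        log.append(ALLOWED_TOOLS[tool](arg))
    return log

# The second step asks for a tool that was never approved, so the run halts there.
for line in run_agent([("search_flights", "LHR-JFK"), ("charge_card", "booking 1")]):
    print(line)
```

Which tools are on the list, how large the budget is, and what the user sees when a run is halted are product decisions as much as engineering ones.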

The Interview Is a Different Game Now

Technical knowledge means nothing if it cannot be demonstrated under pressure in a structured interview environment. And AI product management interviews are materially harder than their traditional counterparts.

Standard PM interview questions test frameworks and communication. AI PM interviews test applied reasoning under ambiguity. Candidates are asked to design evaluation frameworks for probabilistic systems, define success metrics for foundation models, and architect AI workflows for complex enterprise use cases — in real time, with no reference materials.

The Questions That Separate Candidates

- “How would you measure the success of a large language model deployed at enterprise scale? What are the exact metrics, and why did you choose them over alternatives?”

- “Design an AI evaluation framework for a travel booking agent that handles unstructured user requests across multiple booking systems.”

- “Your RAG system is returning irrelevant documents 20% of the time. Walk me through your diagnostic process and proposed remediation strategy.”

These questions do not have single correct answers. They are designed to reveal whether a candidate can apply technical understanding to messy, real-world product problems — or whether their AI fluency is purely theoretical.
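For the RAG question above, one reasonable first diagnostic step is to measure retrieval on its own, separately from generation, against a small labeled set. The sketch below assumes a hand-labeled evaluation set with hypothetical queries and document IDs, and computes precision at k plus the list of queries where nothing relevant was retrieved.

```python
def precision_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are labeled relevant."""
    top = retrieved_ids[:k]
    if not top:
        return 0.0
    return sum(1 for doc_id in top if doc_id in relevant_ids) / len(top)

def diagnose(eval_set: list[dict], k: int = 3) -> tuple[float, list[str]]:
    """Return mean precision@k and the queries with no relevant document in the top k."""
    scores: list[float] = []
    misses: list[str] = []
    for item in eval_set:
        score = precision_at_k(item["retrieved"], item["relevant"], k)
        scores.append(score)
        if score == 0.0:
            misses.append(item["query"])
    return sum(scores) / len(scores), misses

# Hypothetical labeled queries: what the retriever returned vs. what a reviewer marked relevant.
EVAL_SET = [
    {"query": "reset password", "retrieved": ["d1", "d7", "d3"], "relevant": {"d1", "d3"}},
    {"query": "invoice export", "retrieved": ["d9", "d2", "d4"], "relevant": {"d5"}},
    {"query": "sso setup", "retrieved": ["d6", "d8", "d2"], "relevant": {"d6"}},
]

mean_precision, missed_queries = diagnose(EVAL_SET, k=3)
print(f"mean precision@3: {mean_precision:.2f}")
print(f"queries with no relevant result: {missed_queries}")
```

The list of missed queries is usually more useful than the average: it shows whether failures cluster around a topic, a document type, or a phrasing pattern, which in turn points toward chunking, indexing, or ranking as the likely cause.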

The skills gap in AI product management is not closing on its own. Companies are not lowering their standards while they wait for the talent market to catch up. They are hiring the small percentage of candidates who have already done the work — and they are paying a significant premium to do so.

The question that remains unanswered is not whether the transition to AI-native product management is necessary. The evidence on that point is unambiguous. The question is how many working product managers will recognize the urgency before the window closes — and how many will still be writing traditional PRDs when the market stops asking for them.